Introduction

Organizational factors drove the Three Mile Island, Chernobyl, and Fukushima Dai-ichi reactor accidents as much as technical failures did. This established fact drives the nuclear industry's intense focus on safety culture assessment—a structured, multi-method process that evaluates the shared values, behaviors, and norms governing how safety is prioritized at nuclear facilities, from commercial power plants to DOE contractor sites.

While "safety culture assessment" appears throughout regulatory guidance from the NRC, IAEA, INPO, and DOE, the operational process for conducting assessments that produce valid, actionable results is rarely explained with sufficient rigor. That gap matters. The NRC's 2011 Safety Culture Policy Statement explicitly declares it is "not a regulation or rule" and "cannot be considered binding upon, or enforceable against" licensees—meaning assessment quality depends on industry self-governance and professional standards, not regulatory enforcement.

A 2003 independent evaluation at Davis-Besse found that five essential safety culture characteristics were "not yet clearly evident" following the reactor vessel head degradation event, revealing a "very top-down" decision-making culture and "mixed messages" about safety versus production priorities. What the industry learned from Davis-Besse still applies: detecting latent culture failures requires methodological rigor—not just a survey and a summary report.

TLDR

- Safety culture assessment combines surveys, interviews, behavioral observations, and document reviews—not just a perception survey

- The process follows four stages: scoping/planning, data collection, analysis/interpretation, and reporting with action planning

- Key frameworks: INPO's 10 traits, NEI 09-07, NRC policy statement, IAEA guidance, and DOE Guide 450.4-1C

- Biennial formal assessments are the industry standard, supplemented by continuous monitoring panels

- Common pitfalls: survey over-reliance, unvalidated instruments, low response rates, and assessments that produce no behavioral action plans

What Is a Nuclear Safety Culture Assessment and Why It Matters

A nuclear safety culture assessment is a formal, evidence-based review of the degree to which an organization's collective values, leadership behaviors, and workforce norms support safe performance. Findings are evaluated against established frameworks such as INPO's traits of a healthy nuclear safety culture or the NRC's safety culture policy statement components.

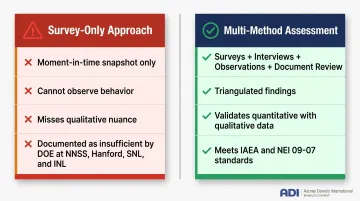

Assessment vs. Survey: A Critical Distinction

Many organizations treat "safety culture assessment" and "safety culture survey" as interchangeable. They aren't. A survey is one quantitative instrument measuring employee perceptions at a point in time. An assessment is the broader process — incorporating surveys, structured interviews, behavioral observations, and document reviews — to reach evidence-based conclusions about culture health.

The DOE Office of Enterprise Assessments documented this gap at multiple contractor sites, each of which relied too heavily on surveys without sufficient qualitative methods.

The INL assessment specifically found the organization "has not recently conducted a laboratory-wide safety culture assessment" using the accepted combination of methods — a direct consequence of treating a survey as a substitute for a full assessment.

Why Nuclear Requires Formal Assessments

Nuclear environments demand formal safety culture assessments because the consequences of culture failures are severe and the failures themselves are often latent: they accumulate quietly before manifesting as incidents. The NRC does not directly mandate assessments through regulation, but it strongly expects them through policy guidance.

Industry bodies like INPO conduct evaluations carrying significant operational weight. Note that specific INPO evaluation outcomes at individual facilities are restricted to member organizations. In practice, this means licensees and DOE contractors must proactively demonstrate safety culture health through credible assessment processes — waiting for regulatory pressure is not a viable strategy.

What "Healthy" Nuclear Safety Culture Looks Like

INPO 12-012 defines 10 traits of a healthy nuclear safety culture organized into three categories:

Individual Commitment to Safety:

- Personal accountability for safety decisions

- Questioning attitude that challenges assumptions

- Effective safety communication across all levels

Management Commitment to Safety:

- Leadership accountability demonstrated through field presence and resource allocation

- Sound decision-making balancing safety with competing goals

- Respectful work environment free from harassment

Management Systems:

- Continuous learning from industry operating experience

- Rigorous problem identification and resolution

- Environment for raising concerns (Safety Conscious Work Environment)

- Work processes that embed safety into operations

These traits form the benchmarks against which assessment findings are evaluated. The NRC's 2011 Policy Statement defines a parallel set of 9 traits with substantial overlap.

How the Nuclear Safety Culture Assessment Process Works

A nuclear safety culture assessment moves through four distinct phases: scoping and planning, data collection, analysis and interpretation, and reporting with action planning. According to NEI 09-07, Revision 1, independent assessments are typically completed within a defined assessment window, often one to two weeks when conducted by an external team.

Phase 1: Scoping and Planning

Effective assessments begin by defining scope: which organizational units, workforce populations, and safety culture focus areas will be evaluated. Critical scoping decisions include:

- Organizational boundaries: Single facility, multiple sites, specific departments

- Workforce segments: Operations, maintenance, engineering, craft workers, contractors

- Focus areas: All INPO traits or targeted attributes based on prior findings

- Assessment team composition: Internal self-assessment, partially independent, or fully external

The level of independence directly affects finding credibility. Following the Davis-Besse reactor vessel head degradation event, FirstEnergy committed to an independent evaluation conducted by external consultants as a condition of restart—recognizing that self-assessment lacked sufficient objectivity.

During scoping, assessment teams establish methodology, select data collection instruments, develop interview guides mapped to safety culture attributes, and communicate the upcoming assessment to the workforce. Pre-assessment communication builds trust and improves participation rates—NEI 09-07 emphasizes that nuclear safety culture "depends on every employee," naming field technicians, security officers, and supplemental workers.

Phase 2: Data Collection

Data collection deploys four primary methods simultaneously:

Quantitative surveys distributed across workforce segments, measuring employee perceptions using validated question sets mapped to safety culture attributes. Critical consideration: ensuring craft workers and employees without regular computer access participate. DOE identified Hanford WTP's use of electronic audience response systems ("clickers") as a best practice, allowing craft workers to respond anonymously during scheduled sessions.

Structured interviews conducted with leadership and representative worker samples at all levels. Interviews are the most diagnostically rich method—skilled interviewers probe for nuances of trust, leadership commitment, and Safety Conscious Work Environment that surveys cannot capture. Best practices include avoiding double-barreled questions (asking about two issues simultaneously, which produces uninterpretable responses) and ensuring demographic stratification across job categories, shifts, and tenure.

Behavioral observation during scheduled activities such as pre-job briefings, operations meetings, and shift turnovers, as well as unplanned moments. Direct observation provides objective, real-time evidence of whether stated safety values appear in practice, as distinct from self-reported perceptions. Behaviors are the observable manifestation of culture and the most reliable indicator of culture health.

Organizations applying Applied Behavior Analysis frameworks gain a structured lens for coding and interpreting observations in ways that inform reinforcement and correction strategies.

Document and record review analyzing corrective action program records, near-miss reports, deviation trends, employee concern program data, and previous assessment findings. These records reveal whether the organization actually acts on safety concerns raised by workers.

Phase 3: Analysis and Interpretation

Assessment teams triangulate findings across data streams: quantitative survey results are compared against qualitative interview themes and behavioral observation notes. Where sources align, conclusions carry greater weight. Where they conflict, the discrepancy itself becomes a finding worth investigating.
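The triangulation step can be illustrated with a minimal sketch. It assumes each data stream has already been reduced to a per-trait score on a shared 1 to 5 scale, which is a hypothetical convention rather than anything prescribed by the frameworks cited here. The trait names, scores, and the 1.0-point gap threshold are likewise invented for illustration.

```python
from typing import Dict, List

# Hypothetical per-trait scores on a common 1-5 scale (illustrative data only).
survey = {"questioning_attitude": 4.2, "raising_concerns": 4.0, "decision_making": 3.1}
interviews = {"questioning_attitude": 4.0, "raising_concerns": 2.6, "decision_making": 3.0}
observations = {"questioning_attitude": 3.9, "raising_concerns": 2.8, "decision_making": 3.3}


def triangulate(streams: Dict[str, Dict[str, float]], max_gap: float = 1.0) -> Dict[str, List[str]]:
    """Split traits into those where the streams agree and those where they conflict.

    A trait counts as 'divergent' when the spread between its highest and
    lowest stream score exceeds max_gap (an assumed threshold, not an
    industry value).
    """
    result: Dict[str, List[str]] = {"convergent": [], "divergent": []}
    # Only compare traits that every stream actually scored.
    traits = set.intersection(*(set(scores) for scores in streams.values()))
    for trait in sorted(traits):
        values = [scores[trait] for scores in streams.values()]
        spread = max(values) - min(values)
        result["divergent" if spread > max_gap else "convergent"].append(trait)
    return result


findings = triangulate(
    {"survey": survey, "interviews": interviews, "observations": observations}
)
print(findings)
```

In this invented data set, the survey rates "raising_concerns" favorably while interviews and observations do not, so the trait lands in the divergent list and would be a candidate for follow-up rather than a conclusion in itself.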

A monitoring panel or cross-functional review group interprets results, bringing diverse organizational perspectives to culture data and reducing the risk that a single assessor's bias skews conclusions. Demographic stratification in analysis matters precisely because aggregate data can mask significant subculture differences. The DOE EA assessment at SNL found that the organization "did not collect sufficient qualitative information" and lacked documented processes for combining quantitative and qualitative sources.

Phase 4: Reporting and Action Planning

Assessment reports must document methodology clearly enough for results to be replicated, explain findings in plain language (not just bar charts), and distinguish between strengths, areas needing attention, and critical concerns mapped to specific safety culture attributes.

Findings should translate into a formal improvement plan that includes:

- Assigned accountability for each improvement area

- Measurable behavioral targets (not just attitude goals)

- A defined reassessment timeline to verify progress

Without these elements, the report becomes a compliance artifact rather than a change tool—a pattern DOE identified at multiple contractor sites, where findings were documented but never acted upon.

Key Assessment Methods: Surveys, Interviews, and Behavioral Observation

Surveys: Quantitative Instruments

Well-designed safety culture surveys measure employee perceptions across defined culture attributes using validated question sets. Psychometric validity is critical—the DOE EA assessment at Hanford WTP found that survey questions were significantly altered from validated instruments without re-evaluating psychometric properties. At INL, 78% of questions changed between 2021 and 2023 deployments. That scale of revision makes it impossible to determine whether result changes reflected actual cultural shifts or simply different question interpretations.
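A simple comparability check before trending results is to measure how much of the question set changed between two deployments. The sketch below assumes each question carries a stable identifier and that wording is compared after basic case and whitespace normalization. The identifiers and wording are invented, and this is not the method DOE used to derive its figures.

```python
from typing import Dict


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial edits are not counted as changes."""
    return " ".join(text.lower().split())


def changed_fraction(old: Dict[str, str], new: Dict[str, str]) -> float:
    """Fraction of the new deployment's questions that are new or reworded."""
    if not new:
        raise ValueError("new question set is empty")
    changed = sum(
        1
        for qid, wording in new.items()
        if qid not in old or normalize(old[qid]) != normalize(wording)
    )
    return changed / len(new)


# Hypothetical question sets from two survey deployments.
old = {
    "Q1": "I feel free to raise safety concerns.",
    "Q2": "My supervisor is present in the field.",
    "Q3": "Safety takes priority over schedule.",
    "Q4": "Problems are fixed promptly.",
}
new = {
    "Q1": "I feel free to raise  safety concerns.",  # whitespace only: unchanged
    "Q2": "Leaders are visible in the field.",       # reworded
    "Q3": "Safety takes priority over production.",  # reworded
    "Q5": "I trust the corrective action program.",  # new item
}

rate = changed_fraction(old, new)
print(f"{rate:.0%} of questions changed")
```

A high changed fraction signals that year-over-year comparisons should be limited to the unchanged items, or that the revised instrument needs its psychometric properties re-evaluated first.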

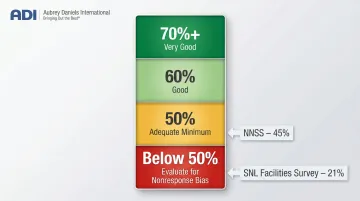

Minimum response rate thresholds from the EFCOG Guide to Safety Culture Evaluation (2015):

| Response Rate | Rating |
|---|---|
| 70%+ | Very good |
| 60% | Good |
| 50% | Adequate minimum |
| Below 50% | Evaluate for nonresponse bias |

DOE found NNSS averaged 45% and SNL's Facilities Survey achieved only 21%—both below the minimum considered representative.
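The thresholds translate directly into a small lookup. This sketch makes one assumption the table does not state: the 60% and 50% rows are treated as the lower bounds of their bands. The function name is hypothetical.

```python
def rate_response(rate_pct: float) -> str:
    """Map a survey response rate (in percent) to the rating bands in the table above.

    Assumption: the table's 60% and 50% rows are lower bounds of a band.
    """
    if not 0 <= rate_pct <= 100:
        raise ValueError("response rate must be between 0 and 100")
    if rate_pct >= 70:
        return "Very good"
    if rate_pct >= 60:
        return "Good"
    if rate_pct >= 50:
        return "Adequate minimum"
    return "Evaluate for nonresponse bias"


# Response rates reported in the DOE findings cited above.
for site, rate in {"NNSS": 45, "SNL Facilities Survey": 21}.items():
    print(site, "->", rate_response(rate))
```

Both sites fall into the lowest band, which calls for a nonresponse bias evaluation before the results are treated as representative.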

Structured Interviews: Qualitative Depth

Survey data tells you what people think. Interviews reveal why, and that distinction matters enormously in nuclear safety culture work.

Best practices for interview question design:

- Map questions to specific safety culture traits and attributes

- Avoid double-barreled questions

- Use open-ended questions that invite narrative responses

- Include both individual contributors and leadership

- Stratify by job category, shift, department, and tenure

The 2003 Davis-Besse evaluation used extensive interviews to reveal that staff perceived "mixed messages" regarding priority of safety over production—a finding unlikely to emerge from survey data alone.

Behavioral Observation: Objective Evidence

Where interviews capture perception, direct observation captures reality. Watching leaders and workers during actual tasks provides objective evidence of whether stated safety values hold up under operational pressure—something no survey or interview can replicate.

Observation schedules should include:

- Planned activities: pre-job briefings, operations meetings, shift turnovers

- Unplanned moments: hallway conversations, break room interactions

- Leadership field presence: how often, how long, what behaviors demonstrated

- Worker responses: questioning attitude, stop-work authority, peer-to-peer feedback

Applied Behavior Analysis (ABA) frameworks provide a structured methodology for coding and interpreting what observers see. ABA-trained practitioners can identify whether safe behaviors are being positively reinforced or quietly extinguished—and design targeted interventions accordingly. ADI's Safe by Accident, co-authored by Judy Agnew, offers a practical model for applying these principles to workplace safety observation programs.

Multi-Stream Monitoring Panels

Best-practice organizations do not rely solely on biennial assessments—they operate ongoing monitoring panels that integrate diverse data streams on a routine basis:

- Survey results (pulse surveys between formal assessments)

- Self-assessments and performance metrics

- Corrective action program trends

- Employee concern program data

- Behavioral observation records

- NRC inspection findings

NEI 09-07 recommends Nuclear Safety Culture Monitoring Panels meet 3-4 times per year, with Site Leadership Teams reviewing culture data at least twice annually. This continuous monitoring model catches cultural drift between formal assessment cycles.

Best Practices for a Reliable Nuclear Safety Culture Assessment

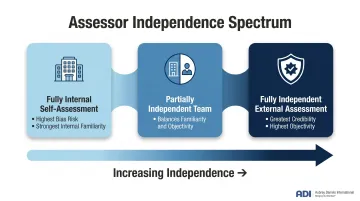

Ensure Assessor Independence and Mitigate Bias

Purely self-directed assessments carry the "fox guarding the henhouse" risk—organizations may unconsciously overlook cultural weaknesses. The spectrum of options includes:

- Fully internal self-assessment: Builds internal capability but carries the highest risk of blind spots and confirmation bias

- Partially independent teams: Combines internal members with external experts to balance familiarity and objectivity

- Fully independent external assessments: Delivers the greatest credibility and objectivity, especially under regulatory scrutiny

The appropriate choice depends on purpose, facility history, and regulatory context. Following significant safety events or periods of heightened NRC oversight, fully independent external assessments carry greater credibility. Even internal teams benefit from structured independence protocols such as direct reporting to the Chief Nuclear Officer rather than site leadership.

Apply Demographic Stratification in Sampling

Representative sampling requires deliberate stratification by job category, department, shift, and tenure—not just headcount proportions. Subcultures within the organization (craft workers, operations, maintenance, engineering, contractors) must each be adequately represented.

Broad demographic groupings can mask significant safety culture problems in specific subcultures. For example, if aggregate survey results show favorable safety culture perceptions but craft workers on night shift hold significantly less favorable views, the aggregate data obscures a critical finding.
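The masking effect is easy to demonstrate with a small sketch. The responses, the 1 to 5 favorability scale, and the 1.0-point flagging margin below are all hypothetical values chosen for illustration, not data from any assessment.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical responses: (job category, shift, favorability score on a 1-5 scale).
responses = [
    ("engineering", "day", 4.5), ("engineering", "day", 4.3),
    ("operations", "day", 4.2), ("operations", "day", 4.4),
    ("operations", "night", 4.0), ("maintenance", "day", 4.1),
    ("craft", "day", 3.9), ("craft", "night", 2.4), ("craft", "night", 2.6),
]


def stratified_means(rows):
    """Return the mean score for each (job category, shift) stratum."""
    groups = defaultdict(list)
    for job, shift, score in rows:
        groups[(job, shift)].append(score)
    return {stratum: mean(scores) for stratum, scores in groups.items()}


overall = mean(score for _, _, score in responses)
by_stratum = stratified_means(responses)

# Flag strata well below the aggregate; the 1.0-point margin is an assumed cutoff.
flagged = [stratum for stratum, m in by_stratum.items() if overall - m > 1.0]

print(f"overall mean: {overall:.2f}")
print("flagged strata:", flagged)
```

The aggregate looks favorable, yet the craft night-shift stratum sits well below it, which is exactly the kind of finding a headcount-proportional rollup would hide.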

Communicate Transparently with the Workforce

Organizations that share upcoming assessment plans, explain methodology to employees, and publish results—including areas for improvement—build the psychological safety and trust that are themselves indicators of healthy safety culture.

Senior leadership visibility in communicating assessment outcomes reinforces the message that safety culture is taken seriously.

Conversely, organizations that conduct assessments behind closed doors and publish only sanitized summaries signal that culture assessment is a compliance exercise rather than a genuine commitment.

Benchmark and Reassess on a Defined Cadence

NEI 09-07 confirms that independent nuclear safety culture assessments are performed biennially (every two years). This cadence is the industry standard for both commercial nuclear and DOE environments, supplemented by continuous monitoring throughout the year.

Benchmarking against peer organizations and industry working groups (such as EFCOG safety culture subtask groups) imports effective practices and prevents the kind of insularity that lets cultural drift go undetected. Without periodic formal assessment, gradual shifts in norms and behaviors can go unnoticed until they become serious problems.

Translate Findings into Behaviorally Specific Action Plans

Assessment reports lose their value if they produce only general cultural conclusions. Effective action planning specifies:

- Which specific behaviors need to change and in what context

- Which level of the organization owns the change (executive, supervisory, or frontline)

- What reinforcement mechanisms will support the change (recognition, feedback, consequences)

- What leading behavioral metrics will track progress, not just lagging outcome data
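The four elements above can be treated as a completeness checklist for each action item. The following is a minimal sketch: the `ActionItem` structure, its field names, and the example entries are hypothetical and not drawn from any standard or from a real improvement plan.

```python
from dataclasses import dataclass, fields
from typing import List


@dataclass
class ActionItem:
    """One improvement action; field names are illustrative, not from any standard."""
    behavior: str        # specific behavior that needs to change
    context: str         # where and when the behavior should occur
    owner_level: str     # "executive", "supervisory", or "frontline"
    reinforcement: str   # recognition, feedback, or consequence mechanism
    leading_metric: str  # behavioral measure tracked ahead of outcome data


VALID_LEVELS = {"executive", "supervisory", "frontline"}


def gaps(item: ActionItem) -> List[str]:
    """List the elements an action item leaves blank or fills in incorrectly."""
    problems = [f.name for f in fields(item) if not getattr(item, f.name).strip()]
    if item.owner_level.strip() and item.owner_level not in VALID_LEVELS:
        problems.append("owner_level (unrecognized)")
    return problems


vague = ActionItem("Improve safety communication", "", "management", "", "")
specific = ActionItem(
    behavior="Supervisors ask for dissenting views before closing pre-job briefings",
    context="Every pre-job briefing on maintenance work orders",
    owner_level="supervisory",
    reinforcement="Peer observer gives feedback after the briefing",
    leading_metric="Share of observed briefings where dissent was invited",
)

print(gaps(vague))
print(gaps(specific))
```

The vague item fails on context, reinforcement, metric, and ownership, while the behaviorally specific item passes with no gaps.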

Organizations that partner with behavioral science consultants gain structured methodologies for designing reinforcement systems that make culture improvements sustainable. ADI, for instance, has spent over 45 years applying Applied Behavior Analysis in nuclear and industrial settings, translating assessment findings into reinforcement system redesigns that change actual behavior patterns rather than just producing recommendations on paper.

Common Pitfalls and Misconceptions in Nuclear Safety Culture Assessments

Over-Reliance on Surveys as Sole Assessment Instrument

A survey captures a moment-in-time snapshot of perceptions. It cannot observe behavior, probe intent, or surface what employees won't say on paper—and its value is only as strong as the validity of the questions themselves. Organizations that treat survey results as the definitive measure of safety culture health routinely miss emerging problems that interviews and direct observations would catch.

DOE's pattern finding across NNSS, Hanford WTP, SNL, and INL demonstrates the insufficiency of survey-only approaches. The INL assessment specifically recommended enhancing methodology through "periodic use of safety culture assessments involving a combination of surveys, interviews, focus groups, and team observations."

IAEA Safety Reports Series No. 83 and NEI 09-07 both treat multi-method approaches as the accepted standard and explicitly flag single-method approaches as a weakness.

Confusing Assessment Activity with Culture Improvement

Conducting an assessment is not itself a culture improvement activity—it is a diagnostic tool. Actual improvement requires follow-through on action plans, visible leader behavior change, and redesigning the reinforcement systems that drive daily habits.

Organizations that cycle through assessments without executing behavioral changes are engaging in compliance theater rather than genuine culture development. The Davis-Besse evaluation found staff skepticism that safety commitment would be sustained post-restart—a warning sign that assessment activity had substituted for the harder work of lasting change.

Treating Assessments as Periodic Compliance Events Rather Than Continuous Monitoring

The "biennial reset" pattern—where an organization intensively focuses on culture for the weeks surrounding a formal assessment, then reverts to prior patterns—represents a failure to integrate safety culture monitoring into ongoing operations.

Contrast this with the monitoring panel model, where safety culture data is actively reviewed on a routine basis throughout the year. NEI 09-07 recommends Nuclear Safety Culture Monitoring Panels meet three to four times per year, reviewing:

- CAP trends and corrective action patterns

- ECP (Employee Concerns Program) data

- Self-assessment results

- Operational performance metrics

This continuous monitoring model allows earlier detection of cultural drift before it manifests as performance problems or safety events.

Frequently Asked Questions

What is the difference between a nuclear safety culture assessment and a safety culture survey?

A survey is a single quantitative instrument measuring employee perceptions at a moment in time. An assessment is the broader multi-method process—including interviews, observations, and document review—that uses survey data as one input among many to evaluate overall culture health.

How often should nuclear facilities conduct safety culture assessments?

Biennial formal assessments are the widely accepted standard in both commercial nuclear (per NEI 09-07) and DOE environments, supplemented by continuous monitoring through panels, self-assessments, and performance metric reviews throughout the year.

Who should conduct a nuclear safety culture assessment—internal teams or external assessors?

The appropriate choice depends on purpose and context: self-assessments build internal capability but carry bias risk; partially or fully independent external assessments provide greater credibility and objectivity, particularly when regulatory scrutiny is elevated or following significant safety events.

What are the INPO traits of a healthy nuclear safety culture?

INPO 12-012 defines 10 traits across three categories:

- Individual Commitment to Safety — personal accountability, questioning attitude, safety communication

- Management Commitment to Safety — leadership accountability, decision-making, respectful work environment

- Management Systems — continuous learning, problem identification/resolution, raising concerns, work processes

How is behavioral observation used in nuclear safety culture assessments?

Behavioral observation means watching leaders and workers during real activities—meetings, briefings, field operations—to assess whether safety values show up in practice. It provides objective evidence that surveys cannot capture, exposing gaps between stated culture and what actually happens on the floor.

What happens after a nuclear safety culture assessment is completed?

Findings should be communicated transparently to the workforce and mapped to specific improvement actions with assigned accountability. Progress is then verified through a defined reassessment cycle; clear ownership and follow-up are what turn findings into real culture change.