Introduction

Many enterprises conduct annual safety surveys — but few translate the findings into measurable changes in frontline behavior. Workers notice when nothing happens after they complete a survey, and over time, that silence erodes trust and participation. Organizations end up collecting data year after year without addressing the cultural and behavioral signals that predict incidents before they occur.

The core problem with most safety surveys is that they measure lagging indicators — injury counts, lost-time incidents, workers' compensation claims. These metrics tell you what already went wrong, not why it happened or when the next incident is likely. Surveys that drive real improvement capture behavioral and cultural signals instead: whether supervisors act on hazard reports, whether employees feel safe speaking up, and whether safety concerns get acknowledged at all.

That shift in focus, from counting incidents to reading culture, is what separates a compliance exercise from a genuine safety tool.

The 10 practices below cover what enterprise safety leaders and behavioral scientists have identified as the most reliable ways to design, deploy, and act on safety surveys — so the data you collect actually changes behavior on the floor.

TL;DR

- Safety surveys work only when they capture behavioral and cultural signals, not just incident tallies

- Anonymity, question design, and survey frequency directly affect response quality and honesty

- Frontline workers must help design surveys and review results to maximize buy-in

- Sharing results and visible action plans is what turns surveys into culture-building tools — not compliance checkboxes

- Reinforcement strategies — not policy updates alone — drive lasting behavioral change after surveys close

Why Employee Safety Surveys Matter for Modern Enterprises

An employee safety survey is a structured tool for measuring safety climate — the shared perceptions, attitudes, and behavioral norms employees hold about safety — rather than tracking incident reports or meeting OSHA recordkeeping requirements. Unlike compliance audits or post-incident investigations, safety surveys capture what workers see, feel, and believe about safety every day.

This distinction matters because surveys measure leading indicators, while injury reports measure lagging indicators. Lagging indicators — injury rates, workers' comp claims — tell you what went wrong after the fact. Leading indicators predict what could go wrong before it does. Examples include:

- Employee willingness to report hazards

- Supervisor responsiveness to safety concerns

- Perceived management commitment to safety

A 2016 Yale study found that each one-percentile-point increase in safety climate scores was associated with 13.61 fewer work-related injuries and illnesses per 10,000 employees. A systematic review of 56 studies found that 90% of the studies using contractor injury records reported a significant negative relationship between safety climate scores and injuries: the higher the climate score, the fewer the injuries.

That relationship between perception and outcome is why survey design and follow-through matter — the data is only useful if the process earns honest responses and drives real action.

The 10 Best Employee Safety Survey Practices

Practice 1: Design Surveys Around Behavioral Indicators, Not Just Incident History

Surveys built primarily around incident counts or near-miss tallies are retrospective: they measure what has already gone wrong. Instead, questions should assess the behaviors and conditions workers observe day to day. Do employees see supervisors modeling safe practices? Do workers feel comfortable reporting a hazard without fear of retaliation? These questions capture behavioral indicators of safety climate, not just lagging safety metrics.

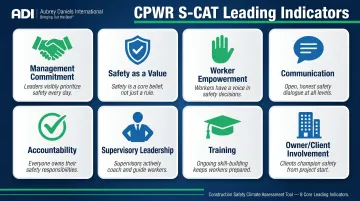

CPWR's Safety Climate Assessment Tool (S-CAT) provides a validated framework based on eight leading indicators:

- Demonstrating management commitment

- Aligning and integrating safety as a value

- Ensuring accountability at all levels

- Improving supervisory leadership

- Empowering and involving workers

- Improving communication

- Training at all levels

- Encouraging owner/client involvement

These dimensions provide a proven, psychometrically validated foundation for what to measure. Questions grounded in frameworks like S-CAT move beyond incident history and focus on the day-to-day behaviors and conditions that drive safety performance.

Practice 2: Guarantee True Anonymity — and Prove It

Perceived anonymity is not the same as actual anonymity, and both matter. Fear of consequences already keeps many hazards unreported: a 2025 Avetta survey found that 68% of U.S. workers regularly notice safety hazards, yet 72% choose not to report them, and 29% fear repercussions for reporting.

If workers suspect their responses can be traced back to them, they will self-censor, especially in small teams or when surveys are administered by direct supervisors.

True anonymity requires:

- Third-party survey platforms that separate responses from identifiable data

- Minimum response thresholds (e.g., at least 5 respondents per unit) before results are shared

- Clear, written communication about data handling, storage, and who has access

- Transparent policies that reinforce the anti-retaliation protections of Section 11(c) of the Occupational Safety and Health Act
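
The first requirement, separating responses from identifiable data, is normally handled by the survey platform. As a minimal sketch of what that step does, assuming raw submissions arrive as Python dictionaries, the hypothetical `deidentify` function below drops direct identifiers and shuffles record order. The field names (`employee_id`, `name`, `email`, `submitted_at`, `unit`) are also hypothetical. This removes only direct identifiers; small reporting units can still expose individuals, which is why the minimum response threshold matters too.

```python
import random

# Hypothetical identifier fields; replace with whatever your platform collects.
# Exact timestamps are treated as identifiers because they can be matched
# against shift schedules or badge logs.
DIRECT_IDENTIFIERS = {"employee_id", "name", "email", "submitted_at"}


def deidentify(submissions, seed=None):
    """Return copies of submissions with direct identifiers removed, in shuffled order."""
    cleaned = [
        {key: value for key, value in record.items() if key not in DIRECT_IDENTIFIERS}
        for record in submissions
    ]
    # Shuffle so output position cannot be matched back to submission order.
    random.Random(seed).shuffle(cleaned)
    return cleaned


raw = [
    {"employee_id": "E102", "name": "A. Lopez", "email": "a.lopez@example.com",
     "submitted_at": "2024-05-02T14:03", "unit": "Warehouse B",
     "q1_supervisor_acts_on_reports": 2},
    {"employee_id": "E377", "name": "J. Chen", "email": "j.chen@example.com",
     "submitted_at": "2024-05-02T14:10", "unit": "Warehouse B",
     "q1_supervisor_acts_on_reports": 4},
]

for row in deidentify(raw, seed=7):
    print(row)  # only "unit" and the answer field remain
```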

When workers trust the process is genuinely anonymous, response quality improves — and the data becomes actionable rather than performative.

Practice 3: Use a Balanced Mix of Quantitative and Open-Ended Questions

Likert-scale questions ("My supervisor takes safety concerns seriously: 1–5") generate trackable, comparable data across time periods and departments. They tell you where problems exist. Open-ended questions surface the specific context and language workers use to describe hazards or cultural problems. They tell you why problems exist.

Both formats are necessary. Quantitative scores allow you to benchmark performance and measure progress. Open-ended responses reveal the specific incidents, behaviors, or conditions behind those scores.

Caution: Surveys that are entirely Likert-based can produce high average scores that mask critical dissent or department-level risks. A practical ratio to start with is roughly 70% structured/quantitative to 30% open-ended.
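
To illustrate how an average can hide dissent, the sketch below reports both the mean and the share of unfavorable answers for a single 1–5 Likert item. Treating 1 and 2 as unfavorable, and the sample responses themselves, are illustrative assumptions; `summarize_item` is a hypothetical helper.

```python
def summarize_item(responses, unfavorable_max=2):
    """Summarize one 1-5 Likert item by its mean and its share of unfavorable answers."""
    unfavorable = sum(1 for r in responses if r <= unfavorable_max)
    return {
        "mean": round(sum(responses) / len(responses), 2),
        "pct_unfavorable": round(100 * unfavorable / len(responses), 1),
    }


# Hypothetical item: "I can report a hazard without fear of retaliation."
responses = [5, 5, 5, 5, 5, 5, 4, 4, 1, 1]
print(summarize_item(responses))  # {'mean': 4.0, 'pct_unfavorable': 20.0}
```

A 4.0 average looks healthy, yet one respondent in five disagrees that reporting is safe. Showing the unfavorable share next to the mean keeps that signal visible, and open-ended follow-ups can explain it.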

Practice 4: Benchmark Survey Design Against Established Safety Climate Frameworks

Don't build safety surveys entirely from scratch. Established frameworks, such as OSHA's Recommended Practices for Safety and Health Programs, CPWR's 8 leading indicators, or (in aviation) ICAO's Safety Management System (SMS) components, provide tested question categories and help ensure coverage of the most predictive safety dimensions.

Using benchmarked frameworks also enables comparison with industry norms and peer organizations. Commonly validated question categories include:

- Management commitment to safety — resources, communication, visible leadership

- Supervisory safety support/leadership — coaching, modeling, responsiveness

- Coworker safety support — peer accountability, mutual reinforcement

- Safety training adequacy — quality, relevance, frequency

- Hazard reporting environment — psychological safety, follow-through on reports

- Safety communication — two-way information flow

These dimensions have been tested and refined across hundreds of studies and thousands of organizations — which is why building from them beats starting from a blank page.

Practice 5: Segment and Analyze Results by Department, Shift, and Role

Enterprise-level aggregate scores can be misleading. A strong overall safety climate score can mask a dangerously low score in a specific shift, location, or job category. Results should always be broken down by operational unit to identify where action is needed most.

Set minimum group sizes (typically 5–10 respondents) to protect anonymity while still enabling meaningful segmentation. This granularity is what makes survey results actionable. If third-shift warehouse workers consistently score management commitment 30% lower than first-shift office staff, that is a targeted intervention opportunity, but only if you segment the data.
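
A minimal sketch of segmentation with a minimum group size follows, assuming each de-identified response carries a department, a shift, and a 1–5 score for one dimension (for example, management commitment). Groups below `min_n` are suppressed instead of reported, and reportable groups whose mean falls at least `gap_pct` percent below the overall mean are flagged. The function names, field names, sample data, and thresholds (`min_n=5`, `gap_pct=20`) are illustrative assumptions, not standards.

```python
from collections import defaultdict


def segment_scores(responses, min_n=5, gap_pct=20.0):
    """Average a 1-5 dimension score by (department, shift), suppressing small groups.

    Returns the overall mean and one row per group. Groups with fewer than
    min_n respondents are marked suppressed and their scores are withheld;
    the rest are flagged when their mean is at least gap_pct percent below
    the overall mean.
    """
    groups = defaultdict(list)
    for r in responses:
        groups[(r["department"], r["shift"])].append(r["score"])

    overall = sum(r["score"] for r in responses) / len(responses)
    rows = []
    for key, scores in sorted(groups.items()):
        if len(scores) < min_n:
            # Too few respondents: list the group, but withhold its scores.
            rows.append({"group": key, "n": len(scores), "suppressed": True})
            continue
        group_mean = sum(scores) / len(scores)
        gap = 100 * (overall - group_mean) / overall  # percent below overall mean
        rows.append({"group": key, "n": len(scores), "mean": round(group_mean, 2),
                     "flag": gap >= gap_pct})
    return round(overall, 2), rows


def responses_for(department, shift, scores):
    """Build sample response records for one group (illustrative data only)."""
    return [{"department": department, "shift": shift, "score": s} for s in scores]


data = (responses_for("Warehouse", "3rd", [2, 2, 3, 2, 3, 2])
        + responses_for("Office", "1st", [4, 5, 4, 4, 5, 4, 4, 5])
        + responses_for("Maintenance", "2nd", [4, 4, 3]))

overall, rows = segment_scores(data)
print("Overall:", overall)
for row in rows:
    print(row)
```

With this sample data, the three-person maintenance group is suppressed, while the third-shift warehouse group is flagged for follow-up.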

Practice 6: Survey at the Right Cadence — Match Frequency to Purpose

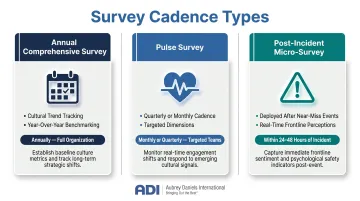

Different survey types serve different purposes:

- Annual comprehensive surveys — suitable for measuring cultural trends and year-over-year progress

- Pulse surveys — shorter, quarterly or monthly check-ins targeting specific safety dimensions

- Post-incident micro-surveys — deployed after a near-miss or safety event to capture frontline perceptions in real time

Qualtrics research from 2022 found that 77% of employees want to provide feedback more than once per year, with the majority preferring approximately four times annually. However, over-surveying without visible action destroys participation rates and employee trust.

Survey fatigue is not just about frequency — it's about follow-through. If workers provide input but see no changes, they stop participating. Cadence must be matched to organizational capacity to analyze, act on, and communicate results.

Practice 7: Involve Frontline Workers in Survey Design

Surveys designed entirely by safety managers or HR without frontline input often use language or reference scenarios that don't reflect workers' daily reality. OSHA's Recommended Practices explicitly call for worker participation in all aspects of safety program evaluation.

Include a step where frontline employees review draft questions for:

- Clarity and readability

- Relevance to actual work conditions

- Missing topics or blind spots

Workers who help design the survey are more likely to complete it. They're also more likely to interpret the process as genuine rather than performative — which directly affects the candor of their responses.

Practice 8: Communicate Results Transparently and Promptly Back to Employees

"Closing the feedback loop" means publishing survey results — including low-scoring areas — to all participants within 30–60 days post-survey. Use formats accessible across employee levels: dashboards, team briefings, visual boards on the floor.

Workers who never see results assume nothing will change, which directly depresses participation in future surveys. Only 7% of employees say their company acts on feedback "really well," while 92% believe it is important for companies to listen. When companies do act on feedback well, employee engagement more than doubles.

Silence sends a signal: your input doesn't matter. Publishing results — even uncomfortable ones — is the single fastest way to demonstrate that the survey was worth completing.

Practice 9: Build Specific, Time-Bound Action Plans Directly from Survey Findings

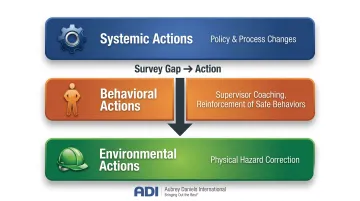

The most common failure point in safety surveys is the gap between data collection and action. Every identified gap in the survey should map to:

- A specific corrective action

- An owner

- A target deadline

Distinguish between:

- Systemic actions — policy or process changes

- Behavioral actions — supervisor coaching, reinforcement of specific safe behaviors

- Environmental actions — physical hazard correction

Tie low-scoring survey items to targeted interventions rather than generic safety awareness campaigns. Then communicate what changed as a direct result of specific feedback. That visible connection between employee voice and organizational response is what keeps workers participating in future surveys.
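
One way to keep every gap tied to an action, an owner, and a deadline is to record the plan as structured data instead of meeting notes. The sketch below is hypothetical: the `ActionItem` and `ActionType` types, the `overdue` helper, and the sample entries are invented for illustration. Its three action types mirror the systemic, behavioral, and environmental distinction above, and `overdue` lists open items past their deadline so they can be raised in follow-up communication.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum


class ActionType(Enum):
    SYSTEMIC = "systemic"            # policy or process change
    BEHAVIORAL = "behavioral"        # supervisor coaching, reinforcing specific safe behaviors
    ENVIRONMENTAL = "environmental"  # physical hazard correction


@dataclass
class ActionItem:
    survey_item: str         # the low-scoring survey item this action responds to
    action: str              # specific corrective action
    action_type: ActionType
    owner: str               # named person accountable for the action
    due: date                # target deadline
    done: bool = False


def overdue(items, today):
    """Return open items whose deadline has passed, earliest deadline first."""
    late = [item for item in items if not item.done and item.due < today]
    return sorted(late, key=lambda item: item.due)


plan = [
    ActionItem("Supervisor acts on hazard reports", "Weekly hazard-report review in shift huddle",
               ActionType.BEHAVIORAL, "Shift lead, Warehouse B", date(2024, 7, 1)),
    ActionItem("Walkways are kept clear", "Re-mark forklift lanes in Aisle 4",
               ActionType.ENVIRONMENTAL, "Facilities manager", date(2024, 6, 15), done=True),
    ActionItem("Near-miss reporting is easy", "Add a QR-code reporting form at each station",
               ActionType.SYSTEMIC, "EHS coordinator", date(2024, 8, 1)),
]

for item in overdue(plan, today=date(2024, 7, 10)):
    print(f"OVERDUE: {item.action} (owner: {item.owner}, due {item.due})")
```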

Practice 10: Train Supervisors to Respond Constructively to Safety Feedback

Supervisors are the most proximal influence on day-to-day safety behaviors. If supervisors react defensively to poor safety scores, punish workers who report hazards, or dismiss safety concerns raised in open-ended responses, the survey process actively harms safety culture.

Supervisors need training on how to:

- Acknowledge concerns without defensiveness

- Co-create action items with their teams

- Follow up on previously reported issues

Aubrey Daniels International (ADI) offers Safety Leadership training that builds these specific capabilities, including how to deliver positive and constructive feedback on safety behaviors and how to build the trusting relationships that make safety conversations stick.

Common Pitfalls That Undermine Safety Survey Effectiveness

The Compliance Checkbox Trap

Organizations often deploy safety surveys primarily to satisfy regulatory requirements or audit expectations, with no infrastructure in place to analyze or act on results. This is actively counterproductive — it signals to employees that their input is not valued and erodes participation and trust over time. Research shows that 36% of workers believe reporting is pointless because they think nothing will change.

Survey Fatigue from Lack of Action

This problem compounds when surveys repeat without visible follow-through. Response rates decline, and the employees who do participate provide less thoughtful answers. As Gallup research notes, conducting a survey with no action on results will likely decrease engagement and increase turnover. Employees need to see that previous surveys produced change before they invest effort in the next one.

Ignoring Qualitative Data

Focusing exclusively on quantitative scores while ignoring open-ended responses is a missed opportunity. The most operationally specific insights come from free-text fields, where employees describe hazards in their own words.

Build a structured process for qualitative analysis that includes:

- Thematic coding to group recurring concerns

- Frequency tracking of specific hazard mentions

- Flagging language that signals urgency or fear
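
A first pass at these three steps can be automated, with human review of every match. The sketch below uses simple keyword matching as a stand-in for proper thematic coding: a hypothetical codebook (`THEMES`) maps each theme to trigger terms, theme mentions are counted across comments, and comments containing hypothetical urgency phrases (`URGENT`) are flagged for immediate review. Terms match at the start of a word, so "report" also catches "reported" without "ppe" matching inside "shipped".

```python
import re
from collections import Counter

# Hypothetical codebook: each theme maps to trigger terms. Real codebooks come
# from reading a sample of comments, and human coders should review every match.
THEMES = {
    "forklift_traffic": ["forklift", "pedestrian", "aisle"],
    "ppe": ["ppe", "gloves", "goggles", "respirator"],
    "reporting_climate": ["report", "ignored", "retaliat", "written up"],
}

# Hypothetical phrases that signal urgency or fear.
URGENT = ["almost hit", "afraid", "scared", "someone will get hurt", "retaliat"]


def mentions(text, terms):
    """True if any term appears at the start of a word in text, ignoring case."""
    return any(re.search(r"\b" + re.escape(term), text, re.IGNORECASE) for term in terms)


def code_responses(comments):
    """Tag comments with themes, count comments per theme, and flag urgent ones."""
    theme_counts = Counter()
    flagged = []
    for text in comments:
        themes = [name for name, terms in THEMES.items() if mentions(text, terms)]
        theme_counts.update(themes)
        if mentions(text, URGENT):
            flagged.append(text)
    return theme_counts, flagged


comments = [
    "Forklifts cut through the pedestrian aisle on nights. I almost hit someone last week.",
    "Gloves wear out in two days and we wait a week for new PPE.",
    "I reported a leak twice and it was ignored.",
    "Honestly afraid to report anything after what happened last month.",
]
counts, flagged = code_responses(comments)
print(counts.most_common())
print(flagged)
```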

Turning Survey Data into Measurable Safety Improvements

Data alone doesn't change behavior. What changes behavior is the consequence environment — specifically, whether safe behaviors are positively reinforced and whether raising safety concerns produces visible, valued outcomes. Survey results should be used to diagnose antecedents (conditions that trigger unsafe behavior) and consequences (what currently reinforces or discourages safe behavior) rather than simply documenting what is going wrong.

A practical continuous improvement loop looks like this:

- Measure — conduct survey

- Analyze — identify behavioral and cultural gaps

- Intervene — deploy targeted behavioral actions and reinforcement strategies

- Reinforce — acknowledge progress, celebrate improvements

- Re-measure — follow-up survey or pulse check

Treat this as an ongoing cycle. Many safety programs skip the reinforcement step, which is precisely why their improvements fail to hold.
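
The re-measure step can be sketched as a comparison of dimension averages between two survey cycles. This is a minimal example under stated assumptions: the dimension names, scores, the `compare_cycles` helper, and the `min_gain` threshold of 0.2 points are hypothetical, and the function does no significance testing, so small shifts in small groups may be noise. Dimensions that stalled or declined feed back into the Analyze step.

```python
def compare_cycles(baseline, follow_up, min_gain=0.2):
    """Compare dimension means between two survey cycles.

    Returns (dimension, change) pairs sorted from weakest to strongest change,
    plus the dimensions that did not improve by at least min_gain points.
    No significance testing is done.
    """
    changes = {dim: round(follow_up[dim] - baseline[dim], 2)
               for dim in baseline if dim in follow_up}
    ranked = sorted(changes.items(), key=lambda pair: pair[1])
    stalled = [dim for dim, change in ranked if change < min_gain]
    return ranked, stalled


# Hypothetical 1-5 dimension averages from two survey cycles.
baseline = {"management_commitment": 3.1, "supervisor_support": 2.8, "hazard_reporting": 2.6}
follow_up = {"management_commitment": 3.2, "supervisor_support": 3.4, "hazard_reporting": 2.5}

ranked, stalled = compare_cycles(baseline, follow_up)
print(ranked)
print("Needs attention:", stalled)
```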

Aubrey Daniels International has spent over 45 years helping organizations apply Applied Behavior Analysis to safety performance. Their work — including Judy Agnew's co-authored book Safe by Accident? — focuses on identifying what behavioral consequences currently drive unsafe actions and building environments where safe behavior is genuinely reinforced rather than punished.

The results are measurable. A meta-analysis of behavior-based safety interventions found an average injury reduction of 26% in year one, climbing to 69% by year five.

Manager and supervisor behavior plays a specific role in sustaining these gains. When survey results are shared but no reinforcement follows — no recognition for teams that improved, no visible accountability for persistent hazard areas — the behavioral progress from any single survey cycle erodes quickly.

OSHA's recommended practices are direct on this point: acknowledge participation, reinforce it positively, and provide regular feedback so workers can see their concerns are being acted on.

Conclusion

Employee safety surveys are only as valuable as the organizational commitment behind them. Better survey design matters, but what drives real improvement is creating the behavioral and cultural conditions where survey data becomes a genuine, ongoing exchange between leadership and the workforce.

Evaluate your current survey process against these practices honestly: Are your surveys capturing the behavioral signals that matter? Is the data driving visible, documented change? If the answer to either is unclear, that gap is worth closing.

Organizations ready to build a behavior-based safety culture — not just improve survey processes — can draw on ADI's 45+ years of applying behavioral science to safety performance across manufacturing, mining, utilities, and beyond. Explore ADI's safety consulting services or reach out to learn how a behavioral science approach can strengthen your safety programs.

Frequently Asked Questions

What is an employee safety survey?

An employee safety survey is a structured measurement tool for assessing safety climate: employees' shared perceptions of safety norms, management commitment, and hazard reporting conditions. It differs from incident reporting systems or compliance audits by capturing leading indicators of safety performance.

How often should employee safety surveys be conducted?

Survey cadence depends on purpose: annual comprehensive surveys for cultural trend tracking, quarterly pulse surveys for targeted dimensions, and post-incident micro-surveys following near-misses. Over-surveying without follow-through degrades response quality and trust.

What are the most important questions to include in a safety survey?

The most predictive question categories include management commitment to safety, supervisor responsiveness to hazard reports, employee psychological safety in reporting concerns, and clarity of safety procedures. Established frameworks like OSHA's recommended practices and CPWR's 8 leading indicators provide validated starting points for each category.

What are the 7 basic safety rules?

Commonly cited universal safety rules include: follow procedures, use proper PPE, report hazards immediately, maintain a clean work area, never bypass safety devices, know emergency procedures, and participate in safety training.

How do you increase employee participation in safety surveys?

Three drivers of participation are guaranteed anonymity, visible action taken on previous survey results, and supervisor behavior that signals safety concerns are welcomed rather than punished.

What is the difference between safety climate and safety culture?

Safety climate refers to employees' current, measurable perceptions of safety norms and management behaviors at a given moment (what surveys capture). Safety culture refers to the deeper, longer-term shared values and assumptions about safety.